🎙️ Can you tell if that voice on the phone is real or AI? Your dialect might be clouding your judgment.

Fascinating new research reveals a hidden vulnerability: we’re significantly more likely to assume AI voices are human when they speak in regional, minority, or non-standard dialects.

✨ Why? Because we’ve been conditioned to believe AI can’t authentically replicate these “underrepresented” language varieties. Researchers call this the MINDSET bias (Minority, Indigenous, Non-standard, & Dialect-Shaped Expectations of Technology).

Here’s what’s counterintuitive: Your accent might make you more vulnerable to AI voice scams—but a simple nudge could change that.

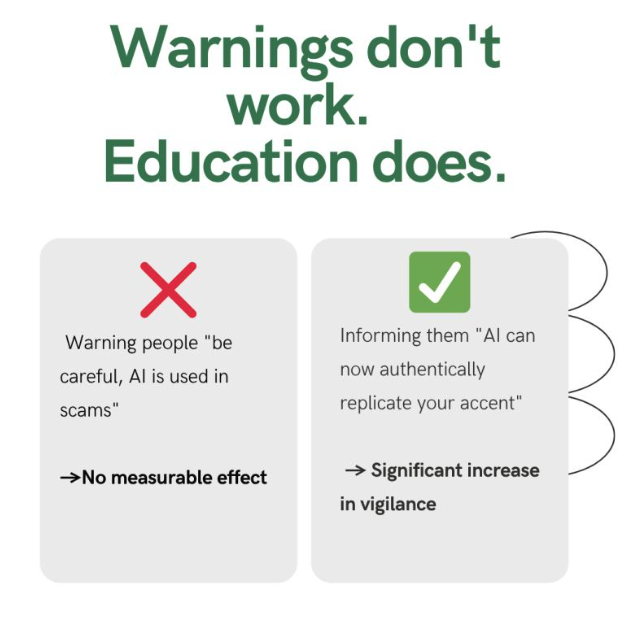

❌ Warning people “be careful, AI is used in scams” → No measurable effect

✅ Informing them “AI can now authentically replicate your accent” → Significant increase in vigilance

The study tested Scottish dialect speakers and found that simply updating people’s expectations about AI capabilities reduced their tendency to default to “that’s a real person” by a meaningful margin.

✨ The takeaway for organizations: Traditional security warnings aren’t enough. If you’re developing fraud prevention campaigns or security protocols, focus on updating what people think AI can do, not just warning them about what criminals might do.

As AI voice technology becomes more sophisticated, communities whose language varieties have been historically excluded from tech systems may face greater risk—unless we actively work to update those outdated assumptions.

Paper by Neil Kirk at Abertay University. Check it out here → https://lnkd.in/dgTTgYFB

What do you think—have you ever caught yourself thinking that standard American speech was fake while a dialect “must be” real? 👇