Think you could spot an AI voice clone? Spoiler: You probably can’t. And neither can I.

I’ve read a paper by Sarah Barrington, Emily A. Cooper & Hany Farid from UC Berkeley and…

In a nutshell: we are surprisingly bad at detecting AI-generated voice clones, both at telling whether two voices belong to the same person and at judging whether a voice is real or fake.

The researchers created AI voice clones of 220 people using ElevenLabs (the same tool used in the fake Biden robocall). Then they ran two studies:

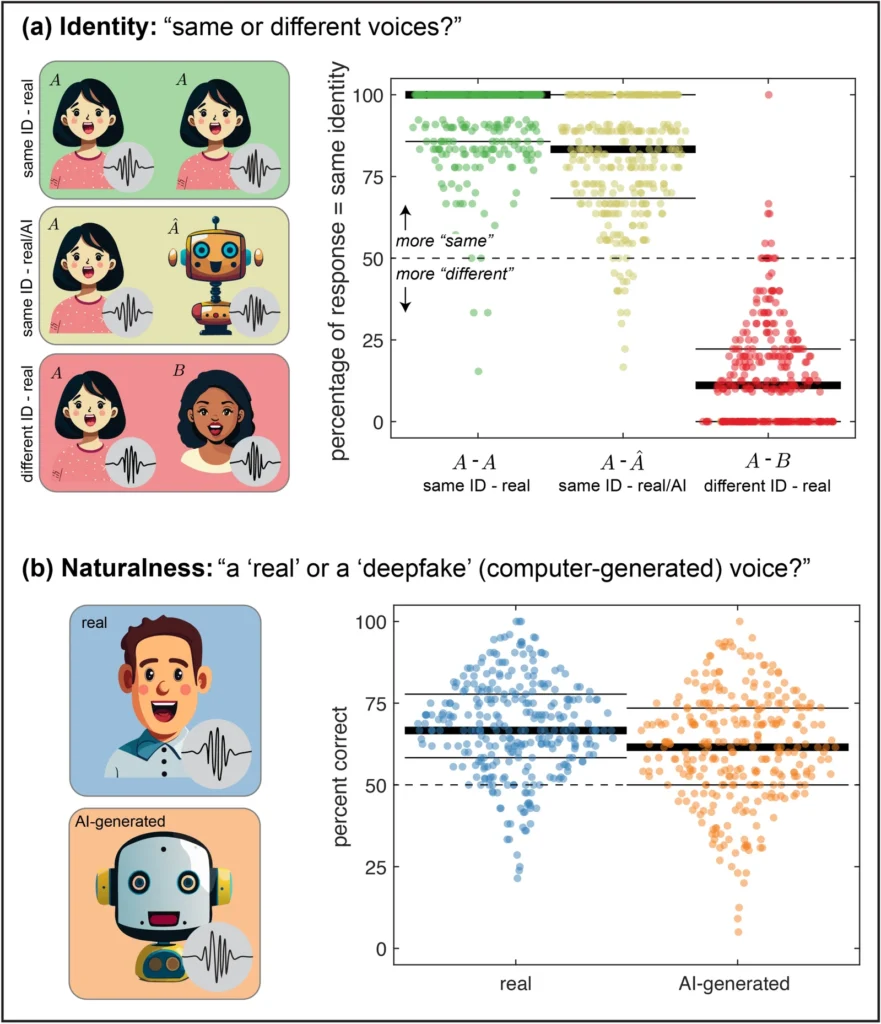

Study 1: Identity Detection “Can people tell if two voices are the same person?”

→ When participants heard a real voice paired with its AI clone, they judged the two to be the same person 80% of the time.

The AI clones were highly convincing at mimicking someone’s identity.

Study 2: Real vs. Fake Detection “Can people tell if a voice is real or AI-generated?”

→ Real voices correctly identified: 67%

→ AI voices correctly identified: 61%

These numbers are barely better than random guessing (50%).
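To put the two rates in perspective, here is a quick back-of-the-envelope check. It assumes listeners heard equal numbers of real and AI clips, which is my simplification and not something stated above; the function name is just for illustration.

```python
def balanced_accuracy(real_correct: float, fake_correct: float) -> float:
    """Average of the two hit rates (assumes equal numbers of real and AI clips)."""
    return (real_correct + fake_correct) / 2


CHANCE = 0.50      # coin-flip baseline for a two-way real/fake choice
real_rate = 0.67   # real voices correctly identified
fake_rate = 0.61   # AI voices correctly identified

overall = balanced_accuracy(real_rate, fake_rate)
margin = overall - CHANCE

print(f"Overall accuracy: {overall:.0%}")
print(f"Margin over chance: {margin * 100:.0f} percentage points")
```

Under that assumption, listeners land roughly a third of the way from a coin flip to a perfect score, which is far from what you would want when a scam call is on the line.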

What Actually Helps Detect AI Voices?

✅ Longer audio clips and unscripted dialogue

✅ Focusing on speech pace and accents

❌ Background noise and breathing sounds (unreliable)

What Does This Mean for Technology?

The researchers suggest watermarking AI-generated audio. But this only works if ALL platforms adopt it. Scammers would simply migrate to platforms without watermarks. Plus, any real-time monitoring raises serious privacy concerns and would need near-perfect accuracy to avoid false alarms.

❓ AI detection tools are everywhere, but this study reveals a harder truth: detection alone isn’t enough. How do we ensure AI generators build security IN from the start—not just bolt it on later? Is mandatory watermarking the answer, or do we need something more robust?

📄 Read the full study: https://lnkd.in/dHC8aymB