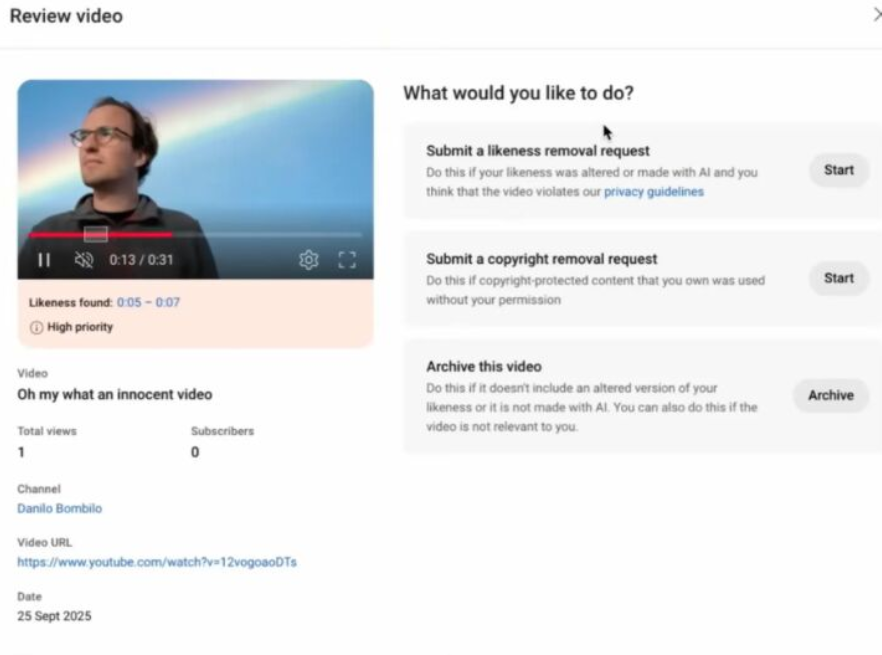

YouTube revealed that its likeness-detection technology has officially rolled out to eligible creators in the YouTube Partner Program, following a pilot phase. The technology lets creators identify and manage AI-generated content featuring their face and voice, and request its removal.

👋 Kinda related to my narrative about deepfake detection, protecting our image, and how all of it is hitting creators…

At first glance, it looks cool: companies are building something to protect creators.

🤡 Hang on a minute. Here's the downside: YouTube, as a company, wants us to fill a massive database with our IDs, personal information, and facial details from every angle. It’s optional, and the appeal of having more control over personal protection is understandable, but I can’t imagine a scenario where this tool wouldn’t be abused.

🧐 The fact that they’re asking creators to voluntarily hand over biometric data and government IDs—and framing it as empowerment—feels deeply problematic.

🤯 Nothing says “we’ve got your back” like asking for your face, voice, and government ID.

While the tool is supposedly designed to protect users from AI-generated impersonations, it creates far more potential for harm than for protection.

❓ Any creators in my network—what’s your take? Protection tool or privacy nightmare?

I was really considering getting out there and starting to create videos (I’m way more fun live than in text), but stuff like this and other concerns (like people being mean to each other online) really hold me back.

Guess I’ll stick to deepfake detection instead of becoming the deepfake. 😬